Linode finally introduced an internal metadata service! <3

I’m sure all of you have been there: after deploying a Compute Instance, you almost always need to perform additional configuration before the server is ready to do any real work.

This configuration might include creating a new user, adding an SSH key, installing software, and so on. It could also include more complicated tasks, like configuring a web server or other software that runs on the instance. Performing these tasks manually is slow, error-prone, and doesn’t scale.

To automate this process, Linode offers two provisioning automation tools: Metadata (used together with cloud-init) and StackScripts.

Cool, but what is Linode’s Metadata service? Well, in a nutshell, it’s an HTTP API that is accessible only from within your instance, nothing more 🙂

While the Metadata service is designed to be consumed by cloud-init, nothing stops you from using it without cloud-init, from any other software or script you can think of. If your software can send HTTP requests and read the responses, it can use Linode’s Metadata service.

This lets you use the same tools across multiple cloud providers, giving you a lot of flexibility in where and how you run your workloads.

One of the great use cases for metadata is dynamically configuring your environment based on instance tags or other parameters.

Let’s take this example: we have dev and production environments, and we want every VM we deploy to be configured the same way, just with different settings (a test database vs. a production database). Your deployment pipeline can query the instance’s Metadata service, read the tags, and instantly know that it’s deploying to a test environment and which database to use.
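Here is a minimal sketch of the tag-based approach. It assumes you tag instances with `stage:<name>`, matching the `stage:dev` tag in the example output further down. The helper names (`stage_from_tags`, `database_for_stage`), the `prod` stage name, and the database hostnames are all hypothetical placeholders.

```bash
# Sketch: pick a database based on the instance's "stage:<name>" tag.
# Helper names, stage names and hostnames are hypothetical placeholders.

# Reads plain-text /v1/instance output on stdin and prints the value of
# the first "stage:" tag (e.g. "dev" for the line "tags: stage:dev").
stage_from_tags() {
  sed -n 's/^tags: stage://p' | head -n 1
}

# Maps a stage name to a database host; fails on unknown stages so a
# misconfigured instance doesn't silently get the wrong database.
database_for_stage() {
  case "$1" in
    prod) echo "db-prod.example.internal" ;;
    dev)  echo "db-dev.example.internal" ;;
    *)    echo "unknown stage: '$1'" >&2; return 1 ;;
  esac
}
```

On an instance, with `TOKEN` set as in the steps further down, you would run `database_for_stage "$(curl -s -H "Metadata-Token: $TOKEN" http://169.254.169.254/v1/instance | stage_from_tags)"`. For the example instance tagged `stage:dev`, that prints `db-dev.example.internal`. Failing loudly on an unknown stage is a deliberate choice: a missing tag should stop the deployment rather than fall back to some default database.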

Another use case is bootstrapping your servers and installing configuration management agents such as Chef.

The Metadata service provides both instance data and optional user data, both of which are explained below:

- Instance data: Information about the Compute Instance, such as its label, plan size, region, host identifier, tags, and more.

- User data: User data is one of the most powerful features of the Metadata service. It lets you define your desired system configuration, including creating users, installing software, configuring settings, and more. You supply user data when deploying, rebuilding, or cloning a Compute Instance. It can be written as a cloud-config file, or it can be any script that can be executed on the target distribution image, such as a bash script. User data can be submitted directly in the Cloud Manager, the Linode CLI, or the Linode API. It’s also often provided programmatically through IaC (Infrastructure as Code) provisioning tools like Terraform.
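Here is a minimal sketch of a bash user data script covering the "create a user, add an SSH key" case from earlier. The `deploy` user, the file name `user-data.sh`, the placeholder key, and the `encode_user_data` helper are all hypothetical.

As far as I know, the Linode API expects user data to be base64-encoded. The service also hands it back encoded, which is why the fetch step below pipes it through `base64 --decode`. The helper therefore encodes the file on a single line.

```bash
# Hypothetical user data script: creates a "deploy" user and authorizes
# an SSH key. Replace the placeholder key with your own public key.
cat > user-data.sh <<'EOF'
#!/bin/bash
set -euo pipefail
useradd --create-home --shell /bin/bash deploy
install -d -m 700 -o deploy -g deploy /home/deploy/.ssh
echo "ssh-ed25519 AAAA...replace-me deploy@example" > /home/deploy/.ssh/authorized_keys
chown deploy:deploy /home/deploy/.ssh/authorized_keys
chmod 600 /home/deploy/.ssh/authorized_keys
EOF

# Base64-encodes a file and strips line wrapping, so the result can be
# pasted into a single JSON string field (assumed API requirement).
encode_user_data() {
  base64 < "$1" | tr -d '\n'
}
```

Running `encode_user_data user-data.sh` prints one long base64 string. Decoding it with `base64 --decode` gives back the original script.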

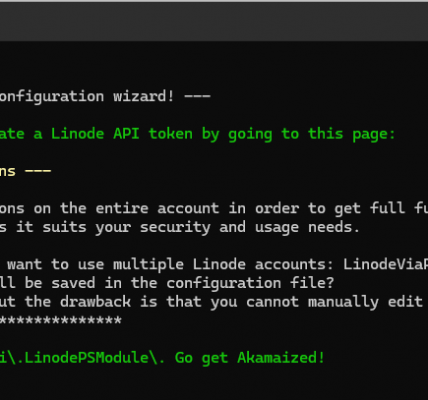

OK, so how do I use the Metadata service? It’s really quite simple:

1. Log into an instance running an OS image that supports the Metadata service.

2. Generate a Metadata API token with the following command:

```bash
export TOKEN=$(curl -X PUT -H "Metadata-Token-Expiry-Seconds: 3600" http://169.254.169.254/v1/token)
```

This puts the authentication token into the `TOKEN` variable. The `Metadata-Token-Expiry-Seconds` header sets how long the token stays valid (here 3600 seconds, i.e. one hour).

3. Fetch the data:

```bash
# Instance info
curl -H "Metadata-Token: $TOKEN" http://169.254.169.254/v1/instance

# Network info
curl -H "Metadata-Token: $TOKEN" http://169.254.169.254/v1/network

# User data
curl -H "Metadata-Token: $TOKEN" http://169.254.169.254/v1/user-data | base64 --decode
```

What data do I get back?

Instance info:

```console
root@localhost:~# curl -H "Metadata-Token: $TOKEN" http://169.254.169.254/v1/instance
backups.enabled: false
host_uuid: 85815b0f16cfa8b12aa12a36530476e701111111
id: 51048111
label: jumphost-us-iad
region: us-iad
specs.disk: 25600
specs.gpus: 0
specs.memory: 1024
specs.transfer: 1000
specs.vcpus: 1
tags: app:jumphost
tags: region:us-iad
tags: stage:dev
type: g6-nanode-1
```
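As the output above shows, the service answers with plain `key: value` lines, and `tags` is repeated once per tag. That makes it easy to consume from a script without extra tooling. Here is a minimal sketch using a hypothetical helper, `metadata_values`, which prints every value for a given key.

```bash
# Sketch: print every value of KEY from "key: value" text on stdin, one per
# line. Repeated keys (like "tags") produce one output line per value.
# Usage: metadata_values KEY
metadata_values() {
  local key="$1" line
  # "|| [[ -n $line ]]" also handles a final line without a trailing newline.
  while IFS= read -r line || [[ -n "$line" ]]; do
    if [[ "$line" == "$key: "* ]]; then
      printf '%s\n' "${line#"$key: "}"
    fi
  done
}
```

For example, `curl -s -H "Metadata-Token: $TOKEN" http://169.254.169.254/v1/instance | metadata_values tags` would print `app:jumphost`, `region:us-iad`, and `stage:dev` on separate lines for the instance above. Swap `tags` for `label` or `specs.memory` to get a single value.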

Network info:

```console
root@localhost:~# curl -H "Metadata-Token: $TOKEN" http://169.254.169.254/v1/network
ipv4.public: 172.233.197.127/32
ipv6.link_local: fe80::f03c:93ff:fe56:3512/128
ipv6.slaac: 2600:3c05::f03c:93ff:fe56:3512/128
```
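A common follow-up is using the public IPv4 address in some configuration, which means dropping the `/32` prefix length. Here is a minimal sketch that wraps the token and fetch steps from above into one function. The function names `metadata_get` and `public_ipv4` are hypothetical; the endpoints and headers are the ones used in this post.

```bash
# Sketch: fetch a Metadata path (e.g. "instance" or "network") with a
# fresh token. Only works from inside a Linode instance.
metadata_get() {
  local token
  token=$(curl -fsS -X PUT -H "Metadata-Token-Expiry-Seconds: 3600" \
    http://169.254.169.254/v1/token) || return 1
  curl -fsS -H "Metadata-Token: $token" "http://169.254.169.254/v1/$1"
}

# Reads /v1/network output on stdin and prints each public IPv4 address
# without its prefix length (172.233.197.127/32 -> 172.233.197.127).
public_ipv4() {
  local line
  while IFS= read -r line || [[ -n "$line" ]]; do
    case "$line" in
      "ipv4.public: "*)
        line=${line#"ipv4.public: "}
        printf '%s\n' "${line%/*}"
        ;;
    esac
  done
}
```

On the example instance, `metadata_get network | public_ipv4` would print `172.233.197.127`. Using `curl -f` means an HTTP error (for example, an expired or missing token) makes the function fail instead of passing an error page down the pipe.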

Cheers, Alex!